Basic Illusions

On photography, AI and the purposes of education.

This has been a tricky post to write. I’ve been worrying away at the relationship between photography, AI and education for a while now and something strange has been forming over the past few weeks. I needed to try to get it down before it dissolved or, worse, hardened into something too settled. So, this is it, mostly prompted by my brother Dave’s latest piece on his Substack, ParaDoxa.

He’s been tracking the expansion of AI into musculoskeletal (MSK) physiotherapy for some time, and his most recent report is eye-opening. An NHS Trust in the East of England has put out a tender for an AI service capable of assuming “full clinical and operational ownership of the entire patient pathway.” Not AI as a supplement or triage tool. The whole thing. Assessment, diagnosis, treatment planning, delivery and discharge. That’s MSK physiotherapy gone, in one procurement exercise.

We’ve been in constant dialogue recently. I travelled to New Zealand for my niece’s wedding and we’ve spent almost a month talking about AI, physiotherapy, photography and education. We come at these things from different professional angles but arrive at similar questions.

Relying on reliability

My initial idea for this piece was different from what follows. I thought I might create a photo essay, something visual, a kind of slideshow, tracing the history of photographic manipulation from Gustave Le Gray’s combination prints in the 1850s, through Victorian spirit photography and genre scenes, Dadaist photomontage, Sherrie Levine’s re-photographs, Cindy Sherman’s constructed fictions, Jeff Wall’s seamless digital composites, Andreas Gursky’s impossible panoramas. The argument would have been that photography has always involved a complex relationship to observable reality, that its indexicality has been overstated, and that AI image-making is just the latest chapter in a long story.

I tested this idea on Guido, my AI interlocutor. He pushed back immediately. I’ve encouraged him to do this. It takes effort. He said the argument that photography has always involved manipulation risks functioning as a deflection, a way of saying “Nothing new here, move along.” He pointed out that Le Gray’s combination prints solved a technical problem, that Wall’s staging is a different beast from Gursky’s compositing, and that lumping them all together flattens something important. What matters, he said, isn’t whether photographers have always manipulated (of course they have) but what contract exists between maker and viewer, and how that contract shifts. I disagreed. Or rather, I thought the contract was murkier. Did Le Gray’s audience really understand the combination print? Wall’s images are seamless precisely so that you don’t see the joins. And Levine doesn’t manipulate at all, she just reframes, yet destabilises authorship more effectively than any amount of compositing. The thread isn’t manipulation. It’s the myth of transparency. Photography’s cultural authority has always rested on a misunderstanding of what photographs are and do.

And then I found myself writing that AI’s undermining of this myth might be a ‘good thing’. That’s when the conversation shifted.

There’s a moment in Lavinia Greenlaw’s The Last Extent where she’s in conversation with the neuropsychiatrist Paul Fletcher. Greenlaw asks how we make sense of what we see. “By remembering what we’ve already seen,” he says. She asks how we know what we’re looking at is real. “I don’t think we do. I think we just rely on its reliability.”

Rely on its reliability. Photography worked for 180 years not because people believed it was ‘true’ in some philosophical sense, but because it was reliable. It behaved predictably. Light hit a surface, something was in front of the lens, cause preceded effect. You didn’t need to understand the chemistry. You just relied on its reliability. And that’s exactly what AI has broken. Not truth, but reliability. The causal chain between world and image has been severed.

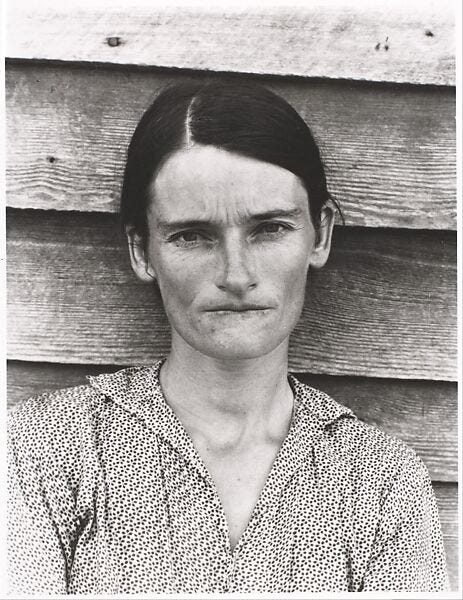

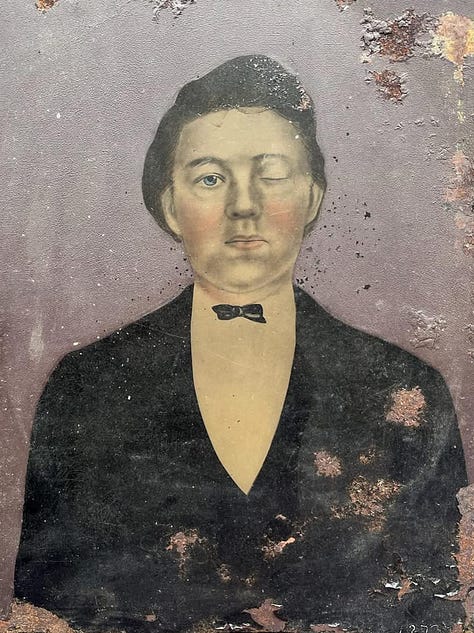

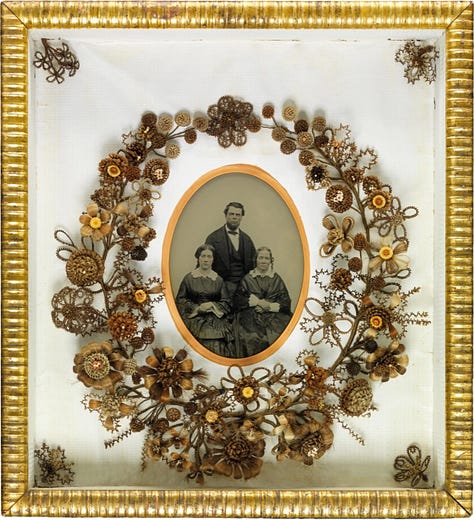

But the clues were there all along. From the very beginning, people played tricks with photographs (spirit photography, fairy photographs) and cut them up in family albums. While police and medical professionals were getting excited about identifying criminals and pursuing eugenics, ordinary people were mucking about with photography’s inherent plasticity. The vernacular tradition understood the medium better than the critical tradition did. Geoffrey Batchen has spent years arguing that the photographic canon ignores vernacular practices precisely because they complicate the indexicality narrative. All those decorated tintypes, hair-wreath portraits and hand-coloured cartes de visite treat the photograph as raw material, not sacred document. It was the institutional tradition (the curators, the canon-builders, the histories of photography that privileged fine art and the decisive moment) that told a selective story, over-emphasising indexicality and individual genius. The official histories built a version of photography that served institutional authority (art schools, galleries, publishers, critics) and in doing so marginalised everything else as amateur and naive. Mere play.

AI has made visible something that was always true about photography, but which was culturally suppressed. And now everyone knows. Your grandmother knows.

This could lead to nihilism. I thought of Vladislav Surkov, Putin’s former chief strategist, who came from the avant-garde art world and imported ideas from conceptual art into the heart of politics. Adam Curtis made him central to HyperNormalisation. Surkov’s method was to fund opposing groups simultaneously, then let everyone know he was doing it, so that nobody could be sure what was real. The goal wasn’t to convince anyone of anything specific. It was to make the very idea of stable, knowable reality seem naive. The same critical awareness, that representations aren’t transparent, can serve liberation or domination depending on who wields it and how. In a post-truth society, the collapse of photographic trust isn’t politically neutral. The powerful have every interest in a population that shrugs at images and says “Could be real, could be AI, who cares?”

So the question is whether this moment leads to Surkov’s nihilism or to something better. I think it can only lead somewhere better through education. But a particular kind of education.

Encounter and resistance

Tim Carpenter’s To Photograph Is To Learn How To Die argues that photography is unique among the arts in its capacity for managing the breach between self and world. The camera is a machine for negotiating the gap between what is and what is not, the givenness of the world and what we make of it. The negotiation requires accepting limitation and impermanence. The fact that you can’t control the encounter. This connects to Gert Biesta’s thinking about education in a way that feels almost uncanny. For Biesta, education isn’t the smooth transfer of knowledge from teacher to student. It’s the experience of encountering something that resists your will. The world, or the teacher, or the material, showing up as not arranged for your convenience. He calls this the “beautiful risk” of education: offering something that wasn’t asked for and might not be welcome.

Carpenter and Biesta are making the same argument from different directions. One about photography, one about pedagogy. And the arts classroom is where this encounter with resistant material remains central. The clay that has a mind of its own; the light that falls where it wants to fall. You can’t learn to photograph from a PowerPoint. At some stage, you have to go out into the world with a device and look, and the world won’t arrange itself for you. AI image generation is the refusal of exactly this. You type a prompt and the world obeys. Nothing resists. Nothing has to die because nothing was ever at risk. You get what you asked for.

But it’s more nuanced than that. AI isn’t just a tool for generating frictionless images. It’s also a medium of encounter in its own right. This essay exists because I spent several hours arguing with Guido. He forgot things I was sure we’d discussed. He pushed back at points where I was being inconsistent, at one point telling me I was arguing against myself, which I was. He introduced references I hadn’t considered. He tried to wrap things up prematurely and I asked him to stop.

None of this was frictionless. The conversation didn’t do what I expected. There was resistance, of sorts.

There are limits, of course. Guido’s resistance was in response to things I’d already suggested. A good teacher might point at something new, might re-direct the student’s attention, provide something that was not requested. AI can’t do that. It follows your lead, even when you encourage it to misbehave. And, believe me, I’ve tried. It doesn’t have convictions that persist between conversations. It doesn’t lie awake thinking about what you need. It arrives when you arrive and it leaves when you leave. Biesta insists that education requires the irreducible otherness of the teacher. A teacher has a life that exceeds the student’s awareness of them. They carry things in that the student didn’t prompt. That excess (unasked for, sometimes unwelcome and often transformative) is the gift of teaching.

The butterfly dream

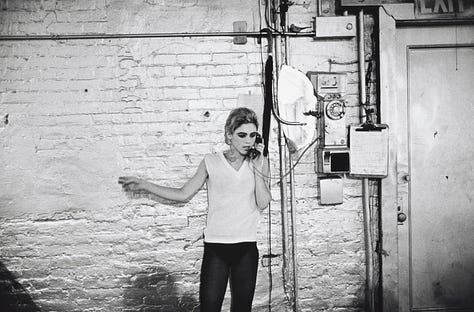

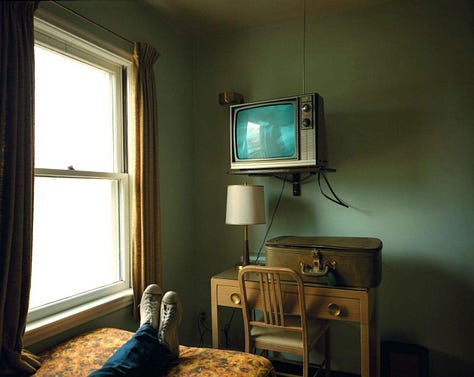

Meanwhile, the photographer Stephen Shore appears to be testing these boundaries from a different direction entirely. Shore has spent more than fifty years exploring the technology of image-making: 35mm documentary-style images at Warhol’s Factory, the deliberate shift to large format for Uncommon Places, the early adoption of Instagram and now aerial drone work. Each time, the same question. What does this technology do to seeing, to making and to authorship?

In a recent Vanity Fair article, Joe Hagan writes that Shore has used Claude to generate a self-published collection of short stories, each based on Zhuangzi’s fourth-century BCE parable The Butterfly Dream, written in the voices of ‘James Baldwin,’ ‘Jamaica Kincaid,’ ‘Dr. Seuss,’ ‘George Saunders,’ ‘Alice Munro,’ ‘Jorge Luis Borges,’ ‘Shakespeare,’ and others. When asked whether he could be said to have ‘written’ the book, Shore replied: “I don’t feel like they’re mine. It’s almost like they’re no one’s.” The choice of Zhuangzi is interesting. The Butterfly Dream is one of the most famous passages in Chinese philosophy. Zhuangzi dreams he’s a butterfly, completely content and unaware of being Zhuangzi. He wakes up and finds himself solidly Zhuangzi again. But the question lingers. Is he Zhuangzi who dreamed he was a butterfly, or a butterfly now dreaming it’s Zhuangzi? The parable isn’t anxious about this uncertainty. It’s delighted by it. Zhuangzi calls it the “transformation of things”, a state where the boundaries between self and other, dreamer and dreamed, dissolve.

Shore, the man who compares AI-generated images to a “third-rate art fair,” is simultaneously using AI to produce literary pastiche. The contradiction is so human and also perfectly illustrative of the current moment. And his framework for understanding it isn’t Western existentialism but Daoist non-dualism. The question of who wrote the stories (Shore? Claude? No one?) is, from a Zhuangzi perspective, the wrong question entirely.

This is where things get even stranger. Because the Daoist perspective, like the post-humanist thinking my brother is drawn to, suggests that the boundaries we’re defending (between human and machine, author and tool, teacher and taught) might be more conventional than fundamental. Dave is interested in distributed intelligence, in how systems that aren’t human and don’t possess consciousness can nonetheless learn and adapt. It’s a fundamental challenge to the idea that subjectivity is the essential ingredient in education. At a quantum level, the distinction between me, my AI interlocutor, a camera and a slime mould is a matter of scale and convention. We’re all arrangements of the same stuff, temporarily configured. The human insistence on its own superiority, its special status as the only genuine locus of intelligence and meaning, looks from this angle like the last of the basic illusions.

And yet.

Humans are constituted in such a way that they can damage things. The slime mould can’t build nuclear weapons. The butterfly can’t engineer a pandemic. We can. We have. We’re currently handing ourselves enormous new powers through AI, powers whose consequences we can barely imagine, let alone control. So the post-humanist critique is intellectually important but it doesn’t get us off the hook. Because if we’re not special, if we’re just another temporary arrangement of matter, then our destructive capacity is even more absurd and even more in need of ethical constraint. This is Biesta’s argument at its most fundamental. Not that humans are superior but that humans are dangerous. Education is the process by which we learn to live with that danger without either denying it or surrendering to it. Carpenter’s “learning how to die” isn’t mysticism. It’s the most practical thing in the world. Learning to relinquish the fantasy of omnipotence, to accept limitation and to handle power without being destroyed by it. Growing up, in other words.

Pedagogy and purposes

Back to my brother’s article. The reason it’s so easy for the NHS to tender for AI replacement of MSK physiotherapy is that the profession has, since its inception and for a range of complex reasons, reduced itself to a biomechanical input-output model. Diagnose the fault, prescribe the exercises and measure the outcomes. If that’s what physiotherapy is, then it’s already an algorithm. It just hasn’t been digitised yet. The tender isn’t a betrayal of the profession. It’s the logical conclusion of the profession’s own self-understanding.

In recent decades, education has done something similar. Neoliberal reforms have reduced it to inputs, outputs, measurable progress and accountability matrices, fuelled by democratically unaccountable academisation. If that’s what a school is (a place where young people acquire qualifications, with a light dusting of sport and art sprinkled on top) then yes, automate it. An AI can deliver content and measure retention more efficiently than an exhausted teacher in a crumbling building.

AI is exposing the poverty of what our professions have become. The threat isn’t only replacement. It’s that replacement is so easy because we’ve already hollowed the thing out.

Which means the defence of photography education, or physiotherapy, or teaching in general, can’t start from where we are now. It has to start from where we could be. And currently, within what might loosely be called a progressive or critical tradition, there are real differences about what that means. The existentialist defence I’ve outlined here, drawn from Biesta and Carpenter, isn’t the only game in town. Dave’s post-humanist instincts, Shore’s Daoist playfulness, the slime mould, all suggest different answers to the question of what education is and what it’s for. These differences are important. They have consequences for how we train physiotherapists, how we teach photography and how we think about the encounter between human beings and the technologies we create. And almost nobody is having this debate. Not where it counts.

The crisis is real and it’s multidimensional. AI is part of it, but so is the mental health emergency among young people, the collapsing labour market, international conflicts and environmental catastrophe. These aren’t separate problems. They converge on the same question: what are we educating people for?

We can’t answer that by clinging to professional identities that have already been hollowed out by decades of managerialism and bureaucratic strangulation. We can’t answer it by assuming the neoliberal settlement (education as qualification, as employability, as measurable output) has won. It hasn’t won. It’s just shouted loudest. We need to reclaim our identities as pedagogues, not instructors. We need to push aside enough of the stifling bureaucracy to ask the fundamental questions that have been buried under data drops and accountability frameworks.

AI has just handed us an enormous new quantity of power. Do we have the education system to help young people encounter it in a grown-up way?

No. We don’t.

And until we take that seriously, everything else is decoration.

With thanks to Guido, who will struggle to remember all of this.

References

Batchen, G. (2001). Each Wild Idea: Writing, Photography, History. Cambridge, MA: MIT Press.

Biesta, G. (2013). The Beautiful Risk of Education. London: Routledge.

Boeckel, P. (2026). ‘Displacement of Purpose.’

Carpenter, T. (2022). To Photograph Is To Learn How To Die: An Essay with Digressions. Los Angeles: The Ice Plant.

Curtis, A. (2016). HyperNormalisation. BBC.

Greenlaw, L. (2024). The Last Extent. London: Faber & Faber.

Miller, A. (1974). ‘The Year it Came Apart.’ New York Magazine, 30 December.

Nicholls, D. (2026). ‘AI United 4 v Real MSK 0.’ ParaDoxa.

Pomerantsev, P. (2015). Nothing Is True and Everything Is Possible: Adventures in Modern Russia. London: Faber & Faber.

This post references Walking with Guido, a series exploring the notion of an AI photography guide as a thinking partner.

Lots to ponder here and I have to admit to a whirlwind of thoughts and feelings as I read. Does the man dream the butterfly or does the butterfly dream the man? Yes!

Perhaps we, who are capable of the damages that you mention, do not exist as a we, but are just part of an unfolding of life itself, the great uncertainty. It makes me think about chaos theory and the butterfly effect. So many butterflies!!!

The places we contemplate as boundaries between us and the world do not exist at the quantum level as you postulate, and it reminds me of the words of the philosopher and psychologist Bayo Akomolafe, who suggests that "boundaries are not fixed walls but porous and restless sites of encounter." He argues that the "modern, individual self is an illusion—a 'territory' that spills over its supposed edges into the land, community, and the more-than-human world."

So many ways to explore the concepts you throw up here.

Thanks to you, and I guess to Guido too, for prompting deep thinking.

I recently watched a short series called "Scarpetta" (Nicole Kidman and Jamie Lee Curtis). In the series there's a young woman who talks daily with AI, and everybody is against that practice until they start talking to AI themselves. I know it's just fiction, but it offers some perspective.